The full article appears in issue 25 of Cloud – the in-flight magazine of private jet firm Air Charter Service. The online version can be found here.

Hot on the heels of the launch of the new Fender Acoustasonic Jazzmaster, Tim Shaw, the guitar maker’s Chief Engineer, spoke to Audio Media International about the development of its latest hybrid guitar and how the team managed to combine the latest technology with Fender’s 75-year heritage…

What was the initial inspiration for the Fender Acoustasonic series?

Brian Swerdfeger [Fender’s Vice President for Research and Design], who’s been a friend of mine for a long time, had an idea for a guitar, which would be a hybrid – an electric acoustic that would do things that previous versions didn’t. There have been tonnes of these things over the years. But they hadn’t quite got it right yet.

So Brian came in initially as a consultant with an idea. He talked to a bunch of our high-level people including [former Fender Chief Product Strategist] Richard McDonald and our CEO Andy Mooney. And Andy basically threw out a challenge and said, ‘Okay, what would Leo [Fender] have done if he was doing this?’ And we started on that basis. We wanted to create something that was a valid musical tool, but was also something where we could break new ground…

You can read the full article on Audio Media International (published on 25 March 2021).

As International Dark Sky Week approaches, Metro looks at how the latest stargazing tech can help you get through the final few months of lockdown

With lockdown making our Earth-based worlds feel much smaller, it’s no wonder people have been seeking solace in the stars. From constellations like Orion to the planet Mars, there’s a lot you can see with the naked eye. The latest game-changing, stargazing tech is making it even easier to get involved from the comfort of your own home, and as International Dark Sky Week hoves into view (it runs from April 5 to 12), now is the perfect time to prep your tech…

The full article appeared in the 26 February 2021 issue of Metro and can also be viewed in the e-edition.

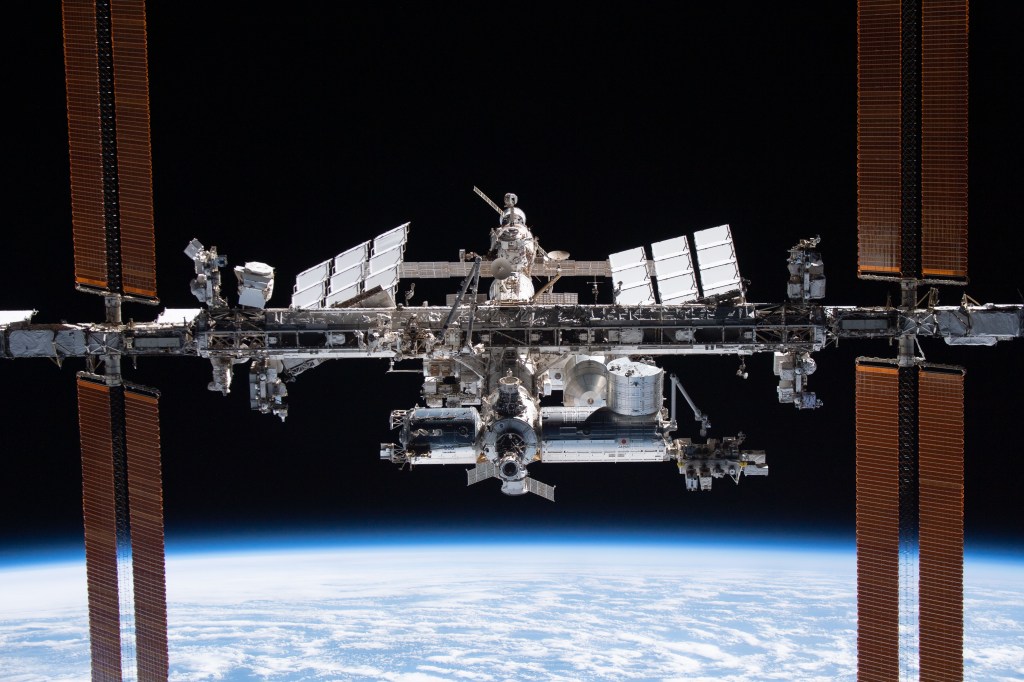

Two decades ago, on November 1, 2000, three humans left Earth for a new life in space. Since then, the International Space Station has been home to a rotating international crew of six astronauts. Metro finds out how they’ve survived

The ISS is the biggest human-made structure in space, measuring 358ft in length, which is about as long as a football pitch. A collaboration between Nasa, Russia’s Roscosmos, Japan’s Jaxa, the Canadian Space Agency (CSA) and the European Space Agency (ESA), the ISS essentially acts as a unique orbiting laboratory where astronauts test out new technologies and carry out scientific experiments in microgravity.

Around 250 to 300 scientific investigations are carried out at any given time and help prepare for future space missions — as well as helping us. For example, the first UK-led experiment on the ISS recently studied a horde of worms to test the effect of muscle loss in space. That could also help us to understand muscle loss in old age…

The full article appeared in the 30 October 2020 issue of Metro and can also be viewed in the e-edition.

UNLESS you’ve been living under an enormous rock or are a conspiracy theorist (in which case past form says you might get a punch in the face from Buzz Aldrin), you’ll know tomorrow marks the 50th anniversary of the Moon landing that made heroes out of the Apollo 11 crew — Neil Armstrong, Aldrin and Michael Collins’ mission was the first of six that saw astronauts leave footprints on the lunar surface.

Although truly revolutionary for its time, some of the technology that landed humans on the Moon was mind-bogglingly primitive by today’s standards. Even the smartphone in your pocket/handbag/manbag has thousands of times the processing power of the computer that guided the astronauts there.

In the 47 years since humans last set foot on lunar soil, tech has moved on — and in just a few years’ time, it could help us go back…

The full article appeared in the 19 July 2019 issue of Metro and can also be viewed in the e-edition.

The historic garment was painstakingly restored using light scanning and 3D mapping ahead of the 50th anniversary of the Apollo 11 mission launch

On 16 July 1969, three men flew to the Moon. Their spacesuits have since been kept at the Smithsonian National Air and Space Museum in Washington DC, but nothing lasts forever. The suit Neil Armstrong was wearing when he became the first man on the Moon hasn’t been on display for 13 years – until the museum decided to launch a Kickstarter campaign in July 2015. Dubbed ‘Reboot the Suit,’ it has 9,477 backers and has managed to raise more than $700,000 (around £539,000).

On 16 July this year, exactly 50 years after the flight, Armstrong’s freshly restored suit will go back on public display. First on temporary display, it’ll later become the centrepiece of the museum’s upcoming Destination Moon exhibition, slated for launch in 2022.

Armstrong’s historic garment is among the most fragile items in the museum’s collection. So how did the Smithsonian go about preserving it for future generations?

You can read the full article at Wired UK (originally published 8 July 2019).

THE big vinyl comeback is no one-hit wonder. In 2018 sales of vinyl LPs rose for the 11th consecutive year to a whopping 4.2 million, according to music industry body BPI. To put that in perspective, just 205,000 LPs were sold when the format was floundering in 2007.

But while you’re listening to Arctic Monkeys’ retro-futurist triumph Tranquility Base Hotel & Casino — last year’s top seller out of the 12,000 LPs released on vinyl, incidentally — consider this: a new format will be amping up the analogue audio party when it hits shops at the end of the year. It’s called HD vinyl and it’s going to inject some big improvements into the audio quality of those polyvinyl chloride discs.

So with vinyl facing a next-gen upgrade and the dizzy rate at which new turntables are released, it’s no wonder that Record Store Day, which sees 200 UK independent record shops band together to celebrate and sell limited-edition releases, is back again this Saturday. Read on to make sure you’re ready for the latest high-def debate…

The full article appeared in the 12 April 2019 issue of Metro and can also be viewed in the e-edition.

The full article appears in issue 18 of Cloud – the in-flight magazine of private jet firm Air Charter Service. The online version can be found here.

The full article appears in issue 17 of Cloud – the in-flight magazine of private jet firm Air Charter Service. The online version can be found here.

Girlguiding has teamed up with the UK Space Agency and the Royal Astronomical Society to introduce a new Space badge for Brownies.

The new interest badge is designed to encourage the astronauts and scientists of the future by giving them the skills and confidence to engage in astronomy and space science.

Available to 200,000 girls aged seven to ten, the new badge is part of a wider move to update the activities available to Brownies and Girl Guides.

Some 800 new activities and badge challenges have been launched to replace more outdated subjects and introduce areas that are more relevant to the modern world…

You can read the full article on Mirror Online (published 24 August 2018).